Alexander Wissel, Executive Editor

It’s worth remembering that when most people say “AI,” they’re not actually talking about artificial intelligence in the classical sense. What most users are interacting with today are LLMs – large language models.

Tools like ChatGPT or Gemini may feel new and disruptive, but versions of this technology have been embedded in daily life for years. Every time Gmail finished your sentence or your phone predicted the next word, you were in fact engaging with an early predecessor of today’s LLMs – testing it, shaping it, and quietly training it.

What’s changed is scale and capability. These systems are now sophisticated enough to communicate conversationally, reason through multi-step problems, and slot directly into professional workflows.

Used well, they are genuinely powerful productivity tools…

Used carelessly, they’re a risk.

As a general rule, if your work can be fully replicated by an LLM with little oversight or judgment, it’s reasonable to assume that one day it will be. That has implications not just for tasks, but for careers.

True artificial intelligence, often referred to as AGI – artificial general intelligence – is something different entirely. AGI implies independent reasoning, agency, and the ability to solve novel problems in a human-like (or superior) way.

Despite the rhetoric and speculation, there’s no credible evidence that we’ve crossed that threshold. For now, AGI remains a theoretical milestone rather than an operational reality – but it’s one that continues to shape how seriously this moment in history is taken.

But let’s get back to the AI programs we have now, not the programs they could become.

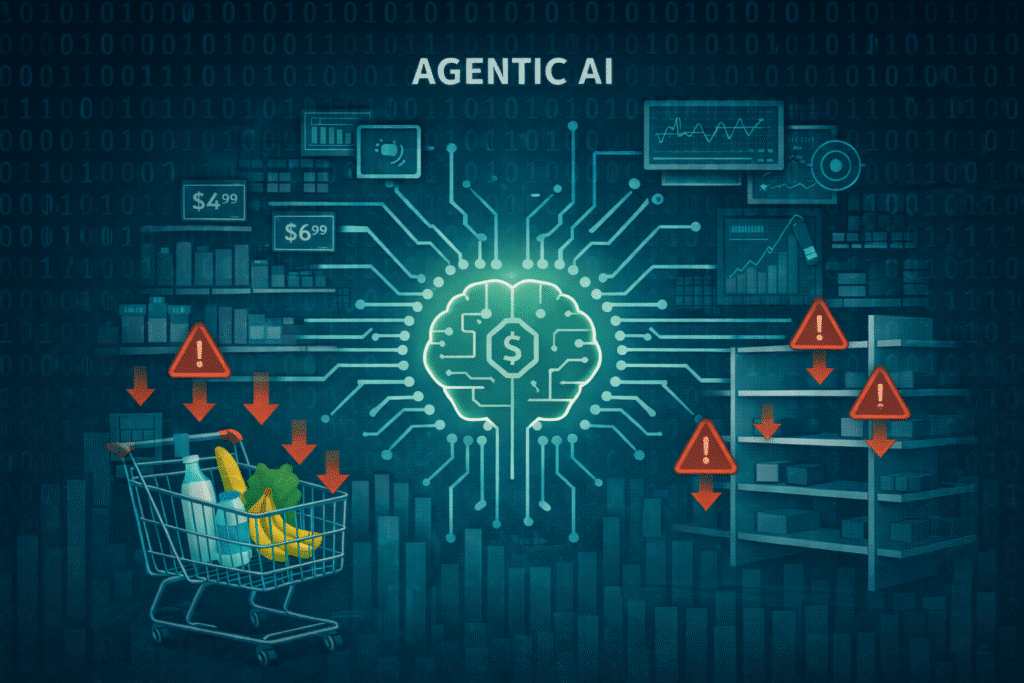

Agentic AI in Practice

At their simplest, AI agents are programs that combine the intelligence of advanced AI models with access to tools so they can take actions on your behalf. Generally we are talking about multi-step tasks rather than a simple query like: “Tell me today’s temperature.”

Instead of searching Google today for flights from, say, snowy New York City to Miami, you task an AI “agent” with procuring a ticket for you. The instructions would sound like this: “AI bot, please buy me a plane ticket from New York to Miami at the best price possible in the next two days. And I want to leave between 10 AM and 4 PM.”

The AI agent would search online for the best available price across all the airlines flying from New York to Miami. Using your information and credit card (the one you’ve already provided to your agent), it would purchase the flight.

Mission accomplished, right? If you’ve dealt with a teenager, maybe you know how giving simple and specific task instructions can still go horribly wrong.

I didn’t tell the agent whether I needed special help, or if I had a rewards account or airline miles to use. I didn’t specify baggage counts, or to look for flights that had free baggage…

I didn’t actually specify the airport I was leaving from. New York City? Or anywhere in New York state? If I expected to leave from LaGuardia and now I’m at JFK, that might be a minor inconvenience. But what if it booked me out of Syracuse in upstate New York instead? And you’ll note I didn’t specify whether I wanted refundable tickets.
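To make those gaps concrete, here is a minimal Python sketch of the request as a structured task form. It assumes a hypothetical `FlightRequest` form and a helper called `unspecified_details`; both names and every field are invented for illustration and are not any real agent’s API. The idea is simply that anything left blank is something the agent would have to guess.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class FlightRequest:
    """Hypothetical task form for a flight-booking agent (illustration only)."""
    origin_city: str
    destination_city: str
    earliest_departure: str                    # "10:00" in the example request
    latest_departure: str                      # "16:00" in the example request
    origin_airport: Optional[str] = None       # LaGuardia? JFK? Syracuse?
    checked_bags: Optional[int] = None
    refundable: Optional[bool] = None
    loyalty_program: Optional[str] = None
    special_assistance: Optional[bool] = None


def unspecified_details(request: FlightRequest) -> list[str]:
    """Return the names of the fields the user never filled in."""
    return [f.name for f in fields(request) if getattr(request, f.name) is None]


# The request exactly as it was given: cities and a departure window, nothing else.
request = FlightRequest(
    origin_city="New York",
    destination_city="Miami",
    earliest_departure="10:00",
    latest_departure="16:00",
)

missing = unspecified_details(request)
print("The agent would have to guess:", ", ".join(missing))
```

A careful agent, or a careful user, would ask about each of those blanks before buying anything.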

AI agents are complicated programs designed to complete tasks – or a sequence of tasks – to achieve a specific, stated goal. The quality of the instructions we give them will determine the quality and the details of the output. GIGO – garbage in, garbage out.

In the same way, when I tell my teenager to take the trash out but never specify when, technically if he plans to do it “in the future” he’s still “doing what I told him”… despite clearly not doing it. I never told him it needed to be done today. My mistake.

Our successes and failures with AI and agentic AI will come down to communication in much the same way.

So now you can see the benefits – and the potential pitfalls – of a consumer asking their AI agent to create a shopping list and tasking it with ordering, purchasing, and arranging delivery. It’s easy, frictionless, and clearly a huge opportunity for retailers. And an opportunity for problems as well.

The Use Case for Agentic AI in Grocery

As with any major innovation, business adoption comes in fits and starts. The overworked and understaffed grocery industry is eyeing AI agents for all sorts of solutions. With its larger margins and marketing budgets, the CPG industry is already aggressively using agentic AI.

At companies that use AI, 51% of retail and CPG executives say they run it in production and deploy agents across a wide range of use cases. Of those, 39% use them for quality control, 38% for supply chain and logistics, and 32% for digital fraud detection.

For this new paradigm, the goal isn’t to augment mundane tasks, but rather to orchestrate a team of specialized AI agents. This working relationship is for everyone, from executives to entry-level employees.

When Google Cloud polled retail and CPG executives for its 2026 AI Agent Trends report, it found that 37% had deployed more than 10 agents.

The future of these programs is wide open, and we have just begun to explore the use cases of agentic AI. The takeaway here is that, while you don’t need to rush to convert your entire business to AI, you do need to be aware of these technological updates and proactive about implementing them.